Replication and Distributed Data: How Databases Scale and Stay Available

Introduction

A single database server can only do so much. At some point, one machine isn't fast enough, isn't reliable enough, or isn't close enough to your users. That's when replication enters the picture.

Replication is the process of keeping copies of your data on multiple machines. It buys you three things: availability (if one machine goes down, others continue serving), read scalability (spread read traffic across many servers), and lower latency (serve users from a server that's physically closer to them).

But replication is never free. Every replication strategy makes a tradeoff between consistency, availability, and complexity. This post walks through all of them.

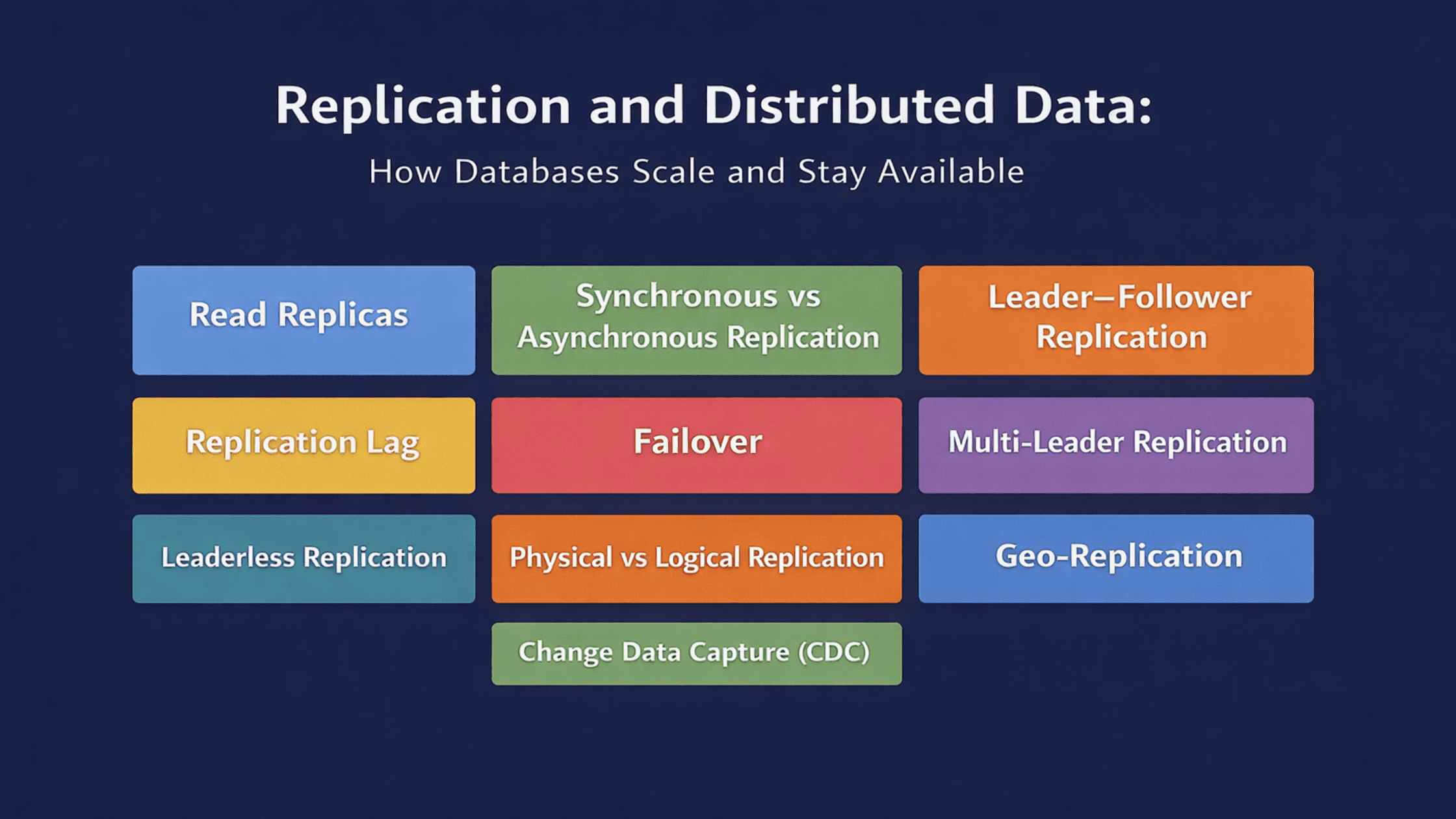

Topics covered:

- Read replicas — offloading reads from the primary

- Synchronous vs asynchronous replication — the durability vs performance tradeoff

- Leader–follower replication — the foundation of most replication systems

- Replication lag — the hidden cost of async replication

- Failover — what happens when the leader dies

- Multi-leader replication — write to multiple nodes, deal with conflicts

- Leaderless replication — no leader at all, quorum-based writes

- Physical vs logical replication — copying bytes vs copying changes

- Geo-replication — data across regions

- CDC (Change Data Capture) — reacting to database changes in real time

Section 1 — The Foundation: Read Replicas

🔹 Read Replicas

Simple Explanation

A read replica is a copy of your database that serves read queries. Writes still go to the primary (main) database. The primary then propagates changes to its replicas. Reads are spread across replicas, reducing load on the primary.

This is the most common first step when a database starts struggling under load — and the most commonly misunderstood one.

Analogy

A busy reference library has one main librarian who updates the catalogue and adds new books. To handle the crowd of people just looking things up, the library makes several photocopied catalogues and stations additional librarians around the room. Readers go to any of them. Only the main librarian makes updates. That's read replicas.

Mini Diagram

All writes → [Primary DB]

↓ replication

┌────────┼────────┐

[Replica 1] [Replica 2] [Replica 3]

↑ ↑ ↑

Read traffic distributed across replicas

PostgreSQL setup

PostgreSQL supports read replicas natively through streaming replication. The replica runs in standby mode and continuously applies WAL records from the primary.

-- On the primary: check connected replicas

SELECT client_addr, state, sent_lsn, replay_lsn

FROM pg_stat_replication;

-- On the replica: check replication status

SELECT now() - pg_last_xact_replay_timestamp() AS replication_lag;

MongoDB setup

In MongoDB, read replicas are part of a replica set — a group of MongoDB instances that maintain the same data. By default all reads go to the primary. To route reads to secondaries:

// Read from a secondary (may return slightly stale data)

db.collection('products').find({}, { readPreference: 'secondary' });

// Prefer a secondary, but fall back to the primary if no secondary is available

db.collection('products').find({}, { readPreference: 'secondaryPreferred' });

The catch most engineers miss

Replicas can be behind. A write to the primary may not appear on replicas immediately. If your application reads from a replica right after writing to the primary, it might not see its own write. This is replication lag — and it's not a bug, it's expected behaviour of async replication.

✅ Good use cases for read replicas:

- Analytics queries that are expensive and read-only

- Product browsing, search, dashboards — data that's OK to be slightly stale

- Background reporting jobs

❌ Bad use cases:

- Reading immediately after writing (e.g., confirm a payment then show the updated balance)

- Inventory checks where stale data means overselling

- Any operation where the user expects to see what they just submitted

Section 2 — Synchronous vs Asynchronous Replication

Before going further, this distinction matters for everything else in the article.

🔹 Synchronous Replication

The primary waits for at least one replica to confirm it has received the write (and, depending on configuration, flushed or applied it) before acknowledging success to the client. An acknowledged write then survives the loss of the primary: if the primary crashes immediately after the write, the replica has the data.

Client → write → Primary

Primary → sends change to Replica

Replica → confirms receipt

Primary → acknowledges success to client ✓

The cost: every write now depends on the round-trip to a replica. If the replica is slow or the network is congested, your writes slow down. If the replica goes down entirely, the primary may block writes until it comes back or you intervene.

PostgreSQL synchronous replication:

# postgresql.conf

synchronous_commit = on

synchronous_standby_names = 'replica1'

With this setting, COMMIT doesn't return to the client until replica1 has confirmed that the WAL record is flushed to its disk. Full durability across two nodes.

🔹 Asynchronous Replication

The primary writes and immediately acknowledges success to the client. It then propagates the change to replicas in the background. Replicas may be slightly behind — this gap is replication lag.

Client → write → Primary

Primary → acknowledges success to client ✓ (immediately)

Primary → sends change to Replica (background, may be delayed)

The tradeoff: writes are fast, but if the primary crashes before the replica catches up, those recent writes are lost. The replica becomes the new primary with missing data.

Most real-world systems use asynchronous replication because the latency impact of synchronous replication is unacceptable at scale or across geographies.
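The difference between the two modes can be made concrete with a toy simulation. This is only a sketch: `lost_after_crash` and its parameters are hypothetical names, the "replica" is a plain list, and the crash is modelled by simply stopping background shipping early.

```python
def lost_after_crash(writes, synchronous, shipped_before_crash=0):
    """Return acknowledged writes that are missing on the replica after the primary crashes.

    Hypothetical model: no real networking, just lists standing in for logs.
    """
    replica_log = []
    pending = []        # async only: acknowledged to the client but not yet shipped
    acknowledged = []
    for value in writes:
        if synchronous:
            replica_log.append(value)   # wait for the replica, then acknowledge
        else:
            pending.append(value)       # acknowledge first, ship in the background
        acknowledged.append(value)
    # Background shipping delivers only part of the queue before the crash.
    replica_log.extend(pending[:shipped_before_crash])
    return [value for value in acknowledged if value not in replica_log]
```

With three writes, the synchronous case loses nothing, while the asynchronous case loses every acknowledged write that had not been shipped when the primary died.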

🔹 Semi-Synchronous Replication

A middle ground: the primary waits for at least one replica to confirm before acknowledging, but doesn't require all replicas to confirm. You get durability on one additional node without waiting for every replica.

MySQL supports this through its semi-synchronous replication plugin. PostgreSQL achieves it by naming a subset of replicas in synchronous_standby_names, for example ANY 1 (replica1, replica2).

Section 3 — Leader–Follower Replication

🔹 Leader–Follower Replication

Simple Explanation

Leader–follower (also called primary–replica or master–slave) is the foundational replication model. One node — the leader — is the single source of truth for writes. Every write goes there first. All other nodes — followers — receive a copy of every write from the leader and apply it to their own local storage.

Reads can be served by either the leader or followers. Writes only go to the leader.
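A minimal in-memory sketch of this model, assuming a simple append-only log of key/value writes. The `Leader` and `Follower` classes are illustrative only and do not correspond to any database's API.

```python
class Leader:
    """Accepts every write and records it in an ordered replication log."""

    def __init__(self):
        self.data = {}
        self.log = []                      # ordered list of (key, value) writes

    def write(self, key, value):
        self.data[key] = value
        self.log.append((key, value))


class Follower:
    """Applies the leader's log in order, remembering how far it has got."""

    def __init__(self):
        self.data = {}
        self.applied = 0                   # index of the next log entry to apply

    def catch_up(self, leader, max_entries=None):
        end = len(leader.log)
        if max_entries is not None:
            end = min(end, self.applied + max_entries)
        for key, value in leader.log[self.applied:end]:
            self.data[key] = value
        self.applied = end
```

A follower that has applied only part of the log serves older data. Once it has applied the whole log, its state matches the leader's.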

Analogy

A newspaper has one editor-in-chief who approves every article before it's published. Copies of the newspaper are then distributed to newsstands across the city. Readers get the news from their nearest newsstand. But everything that goes into the newspaper comes from one source of truth — the editor.

Mini Diagram

Client writes → [Leader]

↓ replication log

[Follower 1] [Follower 2] [Follower 3]

↑ ↑ ↑

Client reads from any follower or leader

How PostgreSQL implements this

PostgreSQL uses WAL-based streaming replication. The leader writes to its WAL. A WAL sender process streams those WAL records to replica WAL receiver processes. Each replica replays the WAL records to catch up.

# On the primary: pg_hba.conf entry for replica connection

host replication replicator 10.0.0.2/32 md5

# On the replica: postgresql.conf

primary_conninfo = 'host=10.0.0.1 port=5432 user=replicator'

How MongoDB implements this

MongoDB uses replica sets — typically one primary and two or more secondaries. The primary writes to its oplog (operations log). Secondaries continuously read the oplog and apply the same operations to their own data.

// Initiate a replica set

rs.initiate({

  _id: "myReplicaSet",

  members: [

    { _id: 0, host: "db1:27017" }, // primary (elected)

    { _id: 1, host: "db2:27017" }, // secondary

    { _id: 2, host: "db3:27017" } // secondary

  ]

})

Limitations of leader–follower

The leader is a bottleneck for writes. All write traffic converges on one machine. If your write volume grows beyond what one machine can handle, leader–follower doesn't help — you need sharding or a different replication model.

The leader is also a single point of failure for writes. If it goes down, no writes can be accepted until a new leader is elected (failover). Reads from followers continue working, but writes stop.

Section 4 — Replication Lag

🔹 Replication Lag

Simple Explanation

Replication lag is the delay between a write being committed on the leader and that write being visible on a follower. In async replication, followers are always slightly behind. How far behind depends on network speed, follower load, and write volume.

Lag is measured in time (milliseconds or seconds) or in log position (bytes behind the leader's write position).
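Measuring lag by log position is simple arithmetic once positions are numbers. As a sketch, assuming PostgreSQL's textual LSN format (two hexadecimal numbers separated by a slash, representing the high and low 32 bits of a 64-bit position), the helper names below are hypothetical:

```python
def parse_lsn(lsn: str) -> int:
    """Convert a PostgreSQL-style LSN such as '16/B374D848' to a byte position."""
    high, low = lsn.split("/")
    return (int(high, 16) << 32) + int(low, 16)


def bytes_behind(leader_lsn: str, follower_lsn: str) -> int:
    """Lag of a follower, in bytes of log it has not yet replayed."""
    return parse_lsn(leader_lsn) - parse_lsn(follower_lsn)
```

For example, `bytes_behind("0/3000060", "0/3000000")` evaluates to 96, meaning the follower still has 96 bytes of log to replay.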

Why lag causes real bugs

Three specific problems arise from replication lag:

1. Read-your-own-writes violation

User submits a form. Data is written to the leader. The confirmation page reads from a replica that hasn't caught up yet. The user sees "no data found" or the old version of their data. They think their submission was lost.

Fix: route reads to the leader for a period after a write from the same user, or use the session-level guarantee ("read your own writes" consistency) — read from the leader until the replica has caught up to the point of that write.
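One way to sketch the "read from the leader for a period after a write" fix is a small router that remembers when each user last wrote. The class name, the 5-second window, and the string targets are all assumptions for illustration. In practice the window should exceed your worst expected replication lag.

```python
import time


class ReadRouter:
    """Pins a user's reads to the leader for a short window after they write."""

    def __init__(self, pin_seconds=5.0, clock=time.monotonic):
        self.pin_seconds = pin_seconds     # assumed upper bound on replication lag
        self.clock = clock                 # injectable so the logic can be tested
        self.last_write = {}               # user_id -> time of that user's latest write

    def record_write(self, user_id):
        self.last_write[user_id] = self.clock()

    def target_for_read(self, user_id):
        wrote_at = self.last_write.get(user_id)
        if wrote_at is not None and self.clock() - wrote_at < self.pin_seconds:
            return "leader"
        return "replica"
```

Only the user who just wrote is routed to the leader. Everyone else keeps reading from replicas, so most of the read offload is preserved.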

2. Monotonic reads violation

User makes two reads. The first hits a replica with 100ms lag. The second hits a replica with 500ms lag. The second read shows older data than the first. Data appears to go backwards.

Fix: route a given user's reads to the same replica consistently (sticky sessions per user).
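Sticky routing can be sketched by hashing the user ID onto the replica list, so the same user always lands on the same replica. The function name and replica labels are hypothetical, and the mapping shifts whenever the replica list itself changes.

```python
import hashlib


def replica_for_user(user_id: str, replicas: list) -> str:
    """Deterministically map a user to one replica using a stable hash."""
    digest = hashlib.sha256(user_id.encode("utf-8")).digest()
    index = int.from_bytes(digest[:8], "big") % len(replicas)
    return replicas[index]
```

Because one replica never moves backwards in time, a user pinned to it cannot see newer data followed by older data.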

3. Consistent prefix reads violation

In a multi-statement sequence — "balance was $1000, then $500 was transferred, now it's $500" — a replica might show the final state without the intermediate steps, or show the steps out of order.

Fix: ensure causally related writes are read from the same replica or use a higher consistency model.

Monitoring lag in PostgreSQL

-- On the primary: see how far each replica is behind

SELECT

client_addr,

state,

sent_lsn,

replay_lsn,

(sent_lsn - replay_lsn) AS bytes_behind

FROM pg_stat_replication;

-- On the replica: lag in seconds

SELECT EXTRACT(EPOCH FROM (now() - pg_last_xact_replay_timestamp())) AS lag_seconds;

Monitoring lag in MongoDB

// Check replica set status including replication lag

rs.status()

// Look at: members[n].optimeDate vs primary optimeDate

// The difference is the lag

Section 5 — Failover

🔹 Failover

Simple Explanation

Failover is what happens when the leader fails. A follower must be promoted to become the new leader so writes can resume. This can happen manually (a human decides which follower to promote) or automatically (the system detects leader failure and elects a new one).

The steps in a typical automatic failover

1. Leader stops responding (crash, network partition, etc.)

2. Followers detect the failure (no heartbeat from leader)

3. Followers run an election to choose a new leader

→ Usually the follower with the most up-to-date data wins

4. New leader starts accepting writes

5. Other followers switch to replicating from new leader

6. Old leader (if it recovers) must recognise it's no longer leader

and become a follower of the new leader

PostgreSQL failover

PostgreSQL doesn't include automatic failover out of the box. Tools like Patroni, repmgr, or pg_auto_failover handle it. Patroni uses a distributed consensus store (etcd, Consul, or ZooKeeper) to coordinate leader election and prevent split-brain.

# Patroni config example

postgresql:

  parameters:

    wal_level: replica

    hot_standby: "on"

    max_wal_senders: 5

bootstrap:

  dcs:

    ttl: 30

    loop_wait: 10

    retry_timeout: 10

    maximum_lag_on_failover: 1048576 # 1 MB: don't promote a replica more than 1 MB behind

MongoDB failover

MongoDB handles failover automatically within a replica set. Secondaries detect a primary failure via heartbeats (every 2 seconds). After 10 seconds without a heartbeat, an election starts. The secondary with the most up-to-date oplog and the highest priority wins.

// Force an election manually

rs.stepDown() // primary steps down, triggers election

// Check who's primary now

rs.status().members.filter(m => m.stateStr === 'PRIMARY')

The split-brain problem

The most dangerous failover scenario: the old leader doesn't know it's been replaced. Network partition causes followers to elect a new leader, but the old leader is still running and accepting writes. Now you have two leaders — a split-brain — and both are taking writes that conflict with each other.

Prevention: use a consensus protocol (Raft, Paxos) that requires a quorum of nodes to agree before a new leader is elected. If the old leader can't reach a quorum, it steps down. If the new leader can't reach a quorum, it doesn't get elected. Only one leader at a time can hold a quorum.

MongoDB's replica set election uses a Raft-inspired protocol. PostgreSQL with Patroni uses etcd or Consul for quorum-based coordination.
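The election and quorum rules from this section can be sketched in a few lines. This is a deliberate simplification, not Raft or any product's actual algorithm: `has_quorum` and `elect_leader` are hypothetical helpers, and each follower is represented only by a name and the log position it has replayed.

```python
def has_quorum(visible_nodes: int, cluster_size: int) -> bool:
    """True if this side of a partition can see a strict majority of the cluster."""
    return visible_nodes >= cluster_size // 2 + 1


def elect_leader(reachable: dict, cluster_size: int):
    """Pick a new leader from reachable followers, or None if they lack a majority.

    reachable maps follower name -> replayed log position (higher is more up to date).
    Ties are broken by name so every node reaches the same decision.
    """
    if not has_quorum(len(reachable), cluster_size):
        return None
    return max(sorted(reachable), key=lambda name: reachable[name])
```

In a three-node cluster, two followers that can see each other form a majority and may elect the more up-to-date one. An old leader that can only see itself fails `has_quorum(1, 3)` and must stop accepting writes, which is what rules out two leaders at once.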

Section 6 — Multi-Leader Replication

🔹 Multi-Leader Replication

Simple Explanation

Multi-leader replication allows more than one node to accept writes. Each leader replicates its writes to all other leaders (and their followers). This removes the single-write-bottleneck of leader–follower and allows writes to continue even when some leaders are unreachable.

The price: when two leaders accept writes to the same data at the same time, you have a conflict. Someone has to resolve it.

Analogy

Two branch offices of the same bank both accept customer account updates during the day. At end of day, they sync. Customer A went into both branches and made changes to the same account from each. Now the branches have conflicting records. Who's right? How do you merge?

Mini Diagram

Client in Asia → writes to [Leader Asia]

Client in Europe → writes to [Leader Europe]

[Leader Asia] ↔ [Leader Europe] (async sync)

Conflict scenario:

Leader Asia: user.name = "Alice M."

Leader Europe: user.name = "Alice Morgan"

→ Which one wins?

Conflict resolution strategies

Last Write Wins (LWW): the write with the latest timestamp wins. Simple, but clocks across distributed systems are unreliable: a write stamped by a node whose clock runs fast can "win" even though it actually happened earlier, silently discarding the logically later write.

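Application-level merge: the application receives both conflicting values and decides how to combine them. Related automatic approaches include CRDTs (Conflict-free Replicated Data Types), whose merge rules are built into the data structure, and operational transformation, the technique behind collaborative editors like Google Docs.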

Deterministic conflict resolution: always pick the value from the leader with the highest ID, or the write with the largest value. Consistent but potentially wrong for the business logic.
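The clock-skew weakness of last-write-wins is easy to reproduce. In this sketch the `Write` record and `last_write_wins` function are hypothetical, and timestamps are whatever wall-clock value the accepting node reported.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Write:
    value: str
    timestamp_ms: int    # wall-clock time reported by the node that accepted the write
    node: str


def last_write_wins(a: Write, b: Write) -> Write:
    """Keep the write with the larger timestamp; break ties by node name."""
    return max(a, b, key=lambda w: (w.timestamp_ms, w.node))


# Assumed scenario: Asia's write really happened first (true time 1000 ms),
# but Asia's clock runs 500 ms fast, so it is stamped 1500.
asia = Write("Alice M.", 1500, "asia")
europe = Write("Alice Morgan", 1200, "europe")   # really happened later
winner = last_write_wins(asia, europe)           # picks the Asia write
```

The later write from Europe is discarded without any error, which is why LWW is usually reserved for data where losing an update is tolerable.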

Where multi-leader is used

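Multi-leader is common in multi-region deployments where network latency between regions makes synchronous single-leader replication impractical. CouchDB and many custom distributed systems use multi-leader models. Google Spanner is sometimes listed alongside them, but it elects a single Paxos leader for each slice of data, so it does not face write conflicts in the same way.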

PostgreSQL doesn't support multi-leader natively. BDR (Bi-Directional Replication) from EDB adds multi-master capability. For MongoDB, multi-region active-active writes require careful application design — MongoDB's native multi-region support is active-passive (one primary region), not active-active.

✅ Use multi-leader when:

- You need writes to continue if a region goes down

- Write latency matters and users are globally distributed

- You can handle conflict resolution complexity

❌ Avoid when:

- Data integrity is non-negotiable and conflicts are unacceptable

- Your team doesn't have the capacity to design robust conflict resolution

Section 7 — Leaderless Replication

🔹 Leaderless Replication

Simple Explanation

Leaderless replication has no designated leader. Any node can accept writes. The system uses quorum-based reads and writes to maintain consistency: a write must be confirmed by a certain number of nodes (write quorum) and a read must query a certain number of nodes (read quorum) for the result to be considered valid.

The quorum formula

If your cluster has N nodes, and you require W nodes to confirm a write and R nodes to respond to a read, then reads and writes are consistent when:

W + R > N

For example, with N=3:

- W=2, R=2: W + R = 4 > 3 ✓ — reads and writes are consistent

- W=1, R=1: W + R = 2 < 3 ✗ — stale reads are possible
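The reason the formula works is that any set of W nodes and any set of R nodes must then share at least one node. A brute-force check over small clusters makes this visible (the function name is hypothetical):

```python
from itertools import combinations


def quorums_always_overlap(n: int, w: int, r: int) -> bool:
    """True if every possible write set of size w shares a node with every read set of size r."""
    nodes = range(n)
    return all(
        set(write_set) & set(read_set)
        for write_set in combinations(nodes, w)
        for read_set in combinations(nodes, r)
    )
```

For N=3, `quorums_always_overlap(3, 2, 2)` is true and `quorums_always_overlap(3, 1, 1)` is false, matching the two cases above. More generally, the result agrees with `w + r > n` for every combination tried in small clusters.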

Mini Diagram

N=3 nodes, W=2, R=2

Write "name=Alice":

→ Node 1: written ✓

→ Node 2: written ✓

→ Node 3: failed ✗

→ 2/3 confirmed → write succeeds (W=2 met)

Read:

→ Node 1: Alice ✓

→ Node 2: Alice ✓

→ 2/3 responded → result = Alice ✓ (R=2 met, both nodes have latest value)

Cassandra's implementation

Cassandra is the most widely used leaderless database. Consistency is tunable per query: drivers set it on each statement, and cqlsh sets it with the CONSISTENCY command (the older USING CONSISTENCY clause was removed in CQL 3):

-- In cqlsh, CONSISTENCY sets the level for the statements that follow

-- Strongest consistency: all replicas must respond

CONSISTENCY ALL

SELECT * FROM users WHERE id = 1;

-- Balanced: a majority of replicas must respond (most common for production)

CONSISTENCY QUORUM

SELECT * FROM users WHERE id = 1;

-- Fastest: one replica responds (weakest consistency)

CONSISTENCY ONE

SELECT * FROM users WHERE id = 1;

Cassandra uses a technique called read repair: when a read detects that a node has stale data, it sends the latest value back to that node in the background. Hinted handoff handles the case where a node was down during a write: the write is stored on another node as a "hint" and forwarded when the node comes back.
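Read repair can be sketched with replicas modelled as dictionaries of versioned values. This is an illustration of the idea rather than Cassandra's implementation: the function name is hypothetical, integer versions stand in for write timestamps, and the repair happens inline instead of in the background.

```python
def quorum_read(replicas: list, key: str, r: int):
    """Read key from the first r replicas, return the newest value, and repair stale copies.

    Each replica is a dict mapping key -> (version, value).
    """
    missing = (0, None)                               # placeholder for "no copy yet"
    contacted = replicas[:r]
    responses = [replica.get(key, missing) for replica in contacted]
    latest = max(responses, key=lambda item: item[0])
    for replica in contacted:
        if replica.get(key, missing)[0] < latest[0]:
            replica[key] = latest                     # read repair: push the newest copy
    return latest[1]
```

Only the replicas contacted by the read are repaired. A node that was not part of the read stays stale until another read, hinted handoff, or a separate repair process reaches it.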

When to use leaderless

Leaderless replication is excellent for write-heavy, globally distributed systems that can tolerate eventual consistency. Cassandra, DynamoDB, and Riak are built on this model. It provides high availability and horizontal write scalability — adding nodes increases both storage and write throughput.

The tradeoff: you give up strong consistency by default. Quorum reads are consistent but not serializable. Write conflicts are possible and require resolution strategies (usually last-write-wins in Cassandra).

Section 8 — Physical vs Logical Replication

🔹 Physical Replication

Simple Explanation

Physical replication copies the actual raw bytes of the database files — disk blocks, WAL records in their binary storage format. The replica ends up as an exact binary copy of the primary, identical right down to the internal page layout.

It's fast and simple, but inflexible. The replica must run the same PostgreSQL major version as the primary, on a compatible platform (same architecture, and in many cases the same operating system), and you can't replicate partial data (specific tables only).

Where it's used

PostgreSQL streaming replication (the default) uses physical replication. The WAL records are raw storage-level change records. The replica applies them without knowing what the logical changes were — just "write these bytes to this disk location."

Primary WAL: [raw binary: page 1042, offset 24, bytes 0x4A3F...]

↓

Replica: applies raw binary to same page and offset

Physical replicas in PostgreSQL are read-only standbys. You can run read queries on them, but they can't be a different PostgreSQL version, can't have extra tables, and can't selectively exclude tables.

✅ Use physical replication when:

- You want an exact standby for failover

- You need the simplest, fastest full-database replication

- All replicas run the same version and OS

❌ Limitations:

- Can't replicate specific tables only

- Replica must be same PostgreSQL major version

- No cross-database-version replication for upgrades

🔹 Logical Replication

Simple Explanation

Logical replication replicates data changes at a higher level — it sends the logical operations (INSERT, UPDATE, DELETE) rather than raw bytes. The replica receives "insert this row into this table" rather than "write these bytes to disk block 1042."

This makes it flexible: you can replicate specific tables, replicate to a different PostgreSQL version, or stream changes to a non-PostgreSQL system entirely.

PostgreSQL logical replication

-- On the primary: create a publication (what to replicate)

CREATE PUBLICATION my_publication FOR TABLE users, orders;

-- On the replica: create a subscription (where to receive)

CREATE SUBSCRIPTION my_subscription

CONNECTION 'host=primary_host dbname=mydb user=replicator'

PUBLICATION my_publication;

The replica now receives all INSERTs, UPDATEs, and DELETEs on the users and orders tables. Other tables are not replicated. The replica can be a different PostgreSQL version. The replica can also have its own additional tables and indexes.
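To see why row-level changes allow selective sync, here is a toy subscriber that applies logical change records to in-memory tables. The record shape and the `apply_change` name are assumptions made for this sketch. In PostgreSQL the filtering is done by the publication on the publisher side, not by the subscriber.

```python
def apply_change(tables: dict, published: set, change: dict) -> bool:
    """Apply one logical change to a subscriber; skip tables outside the publication.

    tables maps table name -> {primary key -> row dict}.
    Returns True if the change was applied.
    """
    table = change["table"]
    if table not in published:
        return False
    rows = tables.setdefault(table, {})
    if change["op"] == "DELETE":
        rows.pop(change["key"], None)
    else:                                   # INSERT and UPDATE carry the full new row
        rows[change["key"]] = change["row"]
    return True
```

Because each change names a table and a row rather than a disk page, the subscriber can hold extra tables of its own and ignore everything it did not subscribe to.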

Use cases for logical replication

Logical replication enables patterns that physical replication can't:

Zero-downtime major version upgrades: run PostgreSQL 15 and 16 side by side. Logically replicate all data from 15 to 16. Switch traffic when ready. No downtime.

Selective sync: replicate only the tables a specific service needs. A reporting database can subscribe only to the tables relevant to its reports.

Fan-out to other systems: stream changes from PostgreSQL to Elasticsearch, Kafka, or a data warehouse by consuming the logical replication stream (this is how many CDC tools work).

Section 9 — Geo-Replication

🔹 Geo-Replication

Simple Explanation

Geo-replication extends replication across geographic regions — different data centres, different countries, different continents. The goal is to reduce latency for users worldwide (serve them from a nearby server) and survive regional failures (if one region goes down, others continue).

Analogy

A global streaming platform like Netflix doesn't serve content from one server in California. They replicate content to regional edge nodes worldwide. A user in Dhaka doesn't stream from California — they stream from a nearby regional server. Geo-replication does the same for databases.

The consistency challenge

Replication across regions introduces much higher latency than replication within a data centre. A round-trip between London and Singapore might be 170ms. Synchronous replication at that distance would add 170ms to every write — completely unacceptable for most applications.

As a result, cross-region replication is almost always asynchronous. That means replicas in other regions can be significantly behind the primary.
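A quick back-of-the-envelope calculation shows why. The sketch below assumes a 2 ms local commit time (an illustrative figure, not a measurement) and the 170 ms round-trip quoted above. The function names are hypothetical.

```python
def commit_latency_ms(local_commit_ms: float, replica_rtt_ms: float, synchronous: bool) -> float:
    """Time until the client sees success for a single write."""
    return local_commit_ms + (replica_rtt_ms if synchronous else 0.0)


def max_sequential_commits_per_second(latency_ms: float) -> float:
    """Upper bound for one connection issuing commits one after another."""
    return 1000.0 / latency_ms


sync_ms = commit_latency_ms(2.0, 170.0, synchronous=True)     # 172.0
async_ms = commit_latency_ms(2.0, 170.0, synchronous=False)   # 2.0
```

Under these assumptions a single connection drops from 500 sequential commits per second to fewer than 6 once every commit waits on the remote region.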

User in Singapore writes to → [Primary: London]

↓ async replication (~170ms delay)

[Replica: Singapore]

→ Singapore user reading immediately may see stale data

PostgreSQL geo-replication

PostgreSQL can stream WAL records across regions. Tools like Patroni can manage a multi-region replica set with one primary region. Some managed services (AWS Aurora Global Database, Google Cloud SQL) handle the cross-region replication transparently and optimise it at the infrastructure level.

MongoDB geo-replication

MongoDB replica sets can span regions by placing nodes in different availability zones or regions. Write concern and read preference settings control where writes go and where reads are served from:

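// Writes go to the primary; reads may be served by the nearest member (could be in Asia)

db.collection('products').find({}, { readPreference: 'nearest' });

// Require acknowledgement from a majority of voting members, with the write journaled

// (this spans two data centres only if no single site holds a majority of members)

db.collection('orders').insertOne(doc, {

  writeConcern: { w: "majority", j: true }

});

Google Spanner — the exception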

Google Spanner achieves strong consistency across global regions using TrueTime, which relies on GPS and atomic clocks to bound clock uncertainty across data centres. Writes are synchronously replicated, and Spanner accepts the latency cost (typically 5–10ms for regional writes, higher for global). It's the closest thing to ACID at global scale, at significant infrastructure cost.

Section 10 — Change Data Capture (CDC)

🔹 CDC

Simple Explanation

Change Data Capture is a technique for detecting and streaming every change that happens in a database — every INSERT, UPDATE, and DELETE — in real time, so other systems can react to those changes.

Instead of other services polling your database or you manually publishing events every time you write, CDC automatically taps into the database's internal change log and streams those changes out. Other systems consume the stream and do whatever they need to with it.

Analogy

Instead of checking your bank account balance every hour to see if anything changed, you sign up for transaction alerts. The bank notifies you the moment a transaction happens — you don't poll, you receive. CDC is your database publishing those alerts for every change it makes.

Mini Diagram

Application writes to PostgreSQL

↓

PostgreSQL WAL

↓

Debezium (CDC tool)

↓

Kafka topic

↙ ↓ ↘

Analytics Search Notifications

service index service

How CDC works under the hood

In PostgreSQL, CDC reads from the WAL using logical decoding. The WAL records every change anyway (for crash recovery and replication). CDC tools like Debezium attach as a logical replication client and decode those WAL records into structured change events.

In MongoDB, CDC reads from the oplog — the operations log that already drives replica set replication. MongoDB's Change Streams API wraps this:

// MongoDB Change Streams: native CDC

// fullDocument: 'updateLookup' asks the server to include the current document on update events

const changeStream = db.collection('orders').watch([], { fullDocument: 'updateLookup' });

changeStream.on('change', (change) => {

  console.log('Change detected:', change.operationType);

  // operationType: 'insert', 'update', 'delete', 'replace'

  console.log('Document:', change.fullDocument); // absent on delete events

  console.log('Changed fields:', change.updateDescription?.updatedFields);

});

PostgreSQL CDC with Debezium

Debezium is the most widely used CDC tool for PostgreSQL. It connects as a logical replication client and publishes changes to Kafka topics.

# Enable logical replication on PostgreSQL

# postgresql.conf

wal_level = logical

max_replication_slots = 4

max_wal_senders = 4

Each table change becomes a Kafka event:

{

"op": "u",

"before": { "id": 1, "status": "pending" },

"after": { "id": 1, "status": "shipped" },

"source": { "table": "orders", "ts_ms": 1700000000000 }

}

Downstream services consume this event stream and react — update a search index, send a notification, sync to a data warehouse, invalidate a cache.
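As a sketch of what such a consumer does, the function below keeps a dictionary standing in for a search index in step with events shaped like the one above. The `apply_event` name and the dictionary index are assumptions. It relies on Debezium's operation codes (`c` create, `r` snapshot read, `u` update, `d` delete) and assumes delete events carry the row's `id` in `before`.

```python
def apply_event(index: dict, event: dict) -> None:
    """Apply one Debezium-style change event to an in-memory index keyed by id."""
    op = event["op"]
    if op in ("c", "r", "u"):              # create, snapshot read, update
        document = event["after"]
        index[document["id"]] = document
    elif op == "d":                        # delete: only the old row is available
        index.pop(event["before"]["id"], None)
    # Other operation types are ignored in this sketch.
```

Applying the same event twice leaves the index unchanged, which matters because CDC pipelines commonly deliver events at least once rather than exactly once.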

What CDC enables

CDC is the backbone of several modern architectural patterns:

Event-driven microservices: services don't call each other's APIs to sync data. They publish changes via CDC and other services consume the stream. Decoupled, async, and resilient to downstream failures.

Zero-downtime migrations: CDC can stream all changes from an old database to a new one while the application is running. When the new database has caught up, switch traffic. No downtime.

Real-time analytics: instead of nightly ETL batch jobs that move data to a warehouse, CDC streams changes to the warehouse continuously. Dashboards update in near real-time.

Cache invalidation: when data changes in the database, CDC triggers a cache bust in Redis or Memcached. No more serving stale cached data after a database update.

The tools

- Debezium — open source, integrates with Kafka, supports PostgreSQL, MySQL, MongoDB, and more

- AWS DMS (Database Migration Service) — managed CDC with support for many database types

- Confluent CDC connectors — managed Kafka-based CDC

- Airbyte — open source data integration platform, supports CDC-based sources

✅ Use CDC when:

- Multiple services need to react to database changes

- You're building event-driven architecture

- You need real-time sync to search indexes, analytics, or caches

- You're migrating databases with zero downtime

❌ Be cautious when:

- You only have one consumer — a direct database call or event from the application is simpler

- Your team doesn't have the infrastructure to run and monitor a CDC pipeline

- The database doesn't have sufficient WAL or oplog retention for your consumer to catch up after downtime

Section 11 — Putting It All Together

Every concept in this post is a response to a specific scaling or reliability problem:

Single database server — works until it doesn't

Add read replicas → solve read scalability

↓

Replication lag appears → understand consistency tradeoffs

Leader goes down → need failover

Leader–follower → simple but single write point

Writes outgrow one node → need multi-leader or leaderless

Multi-leader → flexible but complex conflict resolution

Leaderless → high availability, eventual consistency

Users are global → geo-replication

Async across regions → replication lag is large

Sync across regions (Spanner) → expensive

Changes need to flow to other systems → CDC

WAL/oplog as event stream

Powers event-driven architecture, real-time analytics, cache invalidation

How These Concepts Appear in Real Systems

PostgreSQL: Physical streaming replication is the default. Logical replication for selective sync and cross-version migrations. Patroni for automatic failover. Debezium for CDC. pg_auto_failover or repmgr as simpler alternatives to Patroni.

MongoDB: Replica sets for all replication, with a primary plus secondaries and automatic failover via a Raft-inspired election. Read preference settings route reads to secondaries. Change Streams for native CDC without external tooling. Atlas supports multi-region clusters.

MySQL: Semi-synchronous replication commonly used in production. GTID-based replication for reliable failover. Binlog-based CDC via Debezium. ProxySQL for connection pooling and read/write splitting across replicas.

Cassandra: Leaderless, tunable consistency per query. Replication factor configured per keyspace. Geo-replication via multi-datacenter deployments with NetworkTopologyStrategy. CDC is available through a per-table cdc option that exposes commit log segments, typically consumed with third-party tools.

CockroachDB: Distributed SQL with built-in consensus (Raft). No manual replication setup: every write is automatically replicated to multiple nodes. Multi-region support baked in, with configurable data domiciling for compliance. CDC via changefeeds to Kafka or cloud storage.

AWS Aurora: Six copies of the data across three availability zones, with a write acknowledged once four of the six copies confirm it. Up to 15 read replicas. Aurora Global Database extends async replication across regions with <1 second typical lag. Managed failover typically completes in under 30 seconds.

Conclusion

Replication is where the gap between local development and production becomes obvious. Locally, you have one database, no lag, no failover concerns. In production, you're making constant tradeoffs between consistency, availability, latency, and complexity.

Here's the mental model to carry forward:

- Read replicas buy you read scalability. They come with replication lag — design your application to handle it.

- Async replication is fast but means potential data loss on failure. Sync replication is safe but slow. Most systems default to async; where durability matters, a common compromise is sync to at least one replica (semi-synchronous).

- Leader–follower is the standard model. Simple and reliable, but the leader is a bottleneck and a single point of failure for writes.

- Failover is never instant. Design applications to retry on write failure during leader transitions.

- Multi-leader enables writes from multiple regions but conflict resolution is genuinely hard. Don't underestimate it.

- Leaderless (Cassandra, DynamoDB) gives you high write availability at the cost of strong consistency. Tune your quorum settings carefully.

- Physical replication is fast and exact. Logical replication is flexible and selective.

- Geo-replication reduces latency for global users but makes strong consistency nearly impossible without paying a latency cost.

- CDC turns your database into an event source. If your architecture has multiple systems that need to react to data changes, CDC is cleaner than polling or manual event publishing.

There's no universally correct replication strategy. The right one depends on your read/write ratio, latency requirements, consistency needs, and how much operational complexity your team can manage.